Gall's Law and Prototype Driven Development

Anyone who has worked with software systems will be able to testify to the curse that complexity can bring to a project. Indeed, one of the most touted goals of software development is managing that complexity, and avoiding the spiral into unmaintainability that it can bring about. The exact definition of “complexity” in this context can be tricky to nail down. In general, however, it refers to the internal properties of a system that make its operation more or less difficult to understand. If you are already familiar with complexity, feel free to skip ahead.

The more the system does, the more complex it becomes. Images from Freepik

A system which adds two numbers together and spits out the result is a pretty simple system with low complexity. The inputs, outputs and their interactions are easily understood by a single person. A calculator has slightly higher complexity. There are multiple functions, there needs to be a facility to store results and chain together operations, and the output needs to be formatted correctly. On the more complex end, a spreadsheet program (such as Microsoft Excel or Google Sheets) needs to work on a variety of machines, has different subsystems for manipulating data, showing charts and even supporting custom plugins.

As more entities are added to a system, the relationships and dependencies between them start to grow, leading to greater and greater complexity. Past a certain point it becomes difficult for a single person to hold the whole operation of the system in their head. Usually by that point, a team of people will be working on it, leading to the common pattern of “experts” for each subsection of the application.
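As a rough illustration of how quickly this grows: if every pair of entities can potentially interact, the number of possible relationships is n(n-1)/2. The sketch below assumes pairwise interactions only, which is a simplification (real systems usually have fewer actual dependencies, and some that involve more than two parts), and the function name is made up for this example.

```python
def potential_relationships(entity_count: int) -> int:
    """Upper bound on pairwise relationships between entities (n choose 2)."""
    if entity_count < 0:
        raise ValueError("entity_count must be non-negative")
    return entity_count * (entity_count - 1) // 2


# Entities grow linearly, potential relationships grow quadratically.
for n in (2, 5, 10, 50):
    print(f"{n} entities -> {potential_relationships(n)} potential relationships")
```

Going from 5 entities to 50 is a tenfold increase in parts, but the number of potential relationships goes from 10 to 1225.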

Complexity will generally arise from two sources:

1. The inherent nature of the problem being solved

2. Sub-optimal “translation” of the problem into code (i.e. overcomplicating it)

In general, the complexity arising from 1 cannot be avoided (something I found out is called Tesler’s Law). However, 2 can be mitigated, assuming a sound design is used when implementing the system. Obviously this is easier said than done, given that even modelling the system requires embracing the very complexity you are trying to limit.

On this topic, I am frequently reminded of a quote that I have always quite liked:

A complex system that works is invariably found to have evolved from a simple system that worked. A complex system designed from scratch never works and cannot be patched up to make it work. You have to start over, beginning with a working simple system.

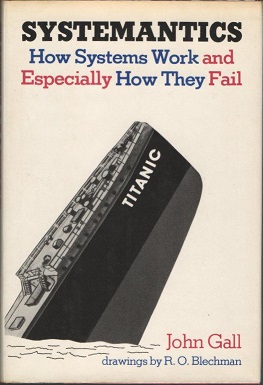

John Gall, Systemantics

The quote comes from a book called Systemantics: How Systems Work and Especially How They Fail by the author John Gall. Embarrassingly, I’ve never read the actual book. It’s also currently out of print (it was first published in 1978), so I have had to order an ex-library copy through Amazon.

Hopefully it’s good.

The general idea, however, resonates with me. A problem I’ve seen in the past is trying to write an entire complex system from scratch. There might be some design, maybe some whiteboarding, but usually a decision is made to rapidly start writing production code. Once this happens, you had better hope your initial design is solid, otherwise you are in for serious (and costly) refactoring down the line. Various unit, acceptance and integration tests are then layered on top, which (while critical for proving correctness) make it difficult to experiment in the initial stages. Add microservices to all of this and well… good luck getting it into production.

A common variant of this problem is hearing of someone starting a project or startup, and finding out that they have spent the majority of their time trying to architect their system to support 1M+ users. API gateways, auto-scaling Kubernetes setups, multi-cloud deployment pipelines and various other fun things end up prioritised over business features. Even if they are able to put all of this together, it does not add value for the client, and more often than not the project ends up collapsing under the weight of the unnecessary complexity. I should know: I’ve made this mistake before. Scaling is important, but there is a time and a place for it, and it’s certainly NOT before you have your first user.

There is something to be said, I think, for the humble prototype: a version of the product so simple that it may not necessarily be fully correct, but which allows rapid iteration on ideas and modelling of the high level concepts. Making prototypes is, I feel, an oft-overlooked practice in software. In other engineering disciplines, the creation of prototypes is very common.

The following clip from the excellent TV series From the Earth to the Moon showcases the prototyping process pretty well.

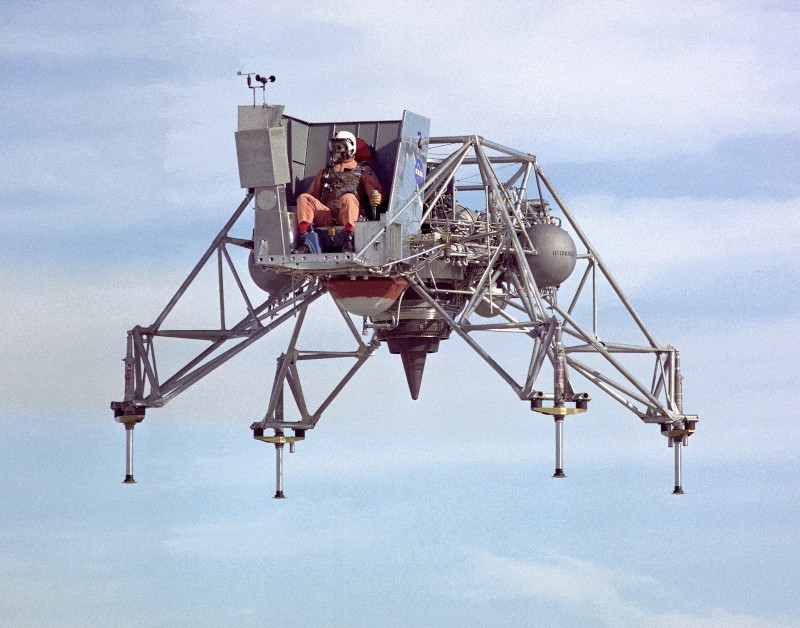

By putting together a simple prototype, it is possible to not only test out and visualise how a system might work, but also make adjustments. Depending on what is being built and the requirements, a prototype can be more or less complex. As a follow-up example, consider the LLRV (Lunar Landing Research Vehicle), which was a functional prototype. It was essentially a guy strapped to a chair attached to a jet engine. Perhaps an extreme version of User Acceptance Testing.

Subsequent versions of these were used as training simulators for astronauts

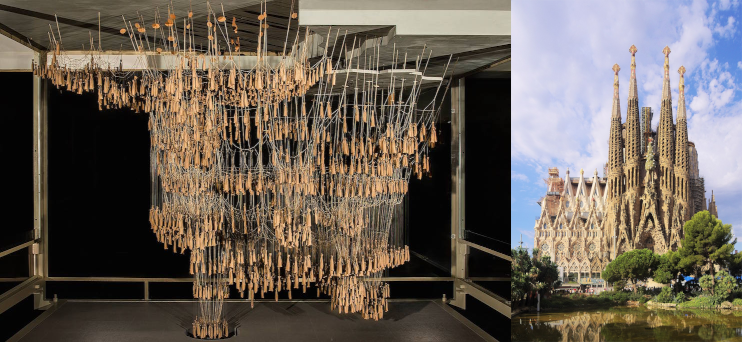

In 1889 the Catalan architect Antoni Gaudí used a system of chains and weights to prototype the Sagrada Família cathedral in Barcelona. Using this method, he could design the overall layout of the cathedral since adjusting the chains or weights resulted in an instant “physical recomputation” of the entire system. Again, by creating a highly simplified version of the system, he was able to do experiments and simulations without having to “refactor” an entire cathedral.

Gaudí’s weight & chain simulation (left) and the final cathedral (right). Source: Sagrada Familia Blog and Wikipedia

A good prototype needs a couple of things:

- First and foremost it needs to test or simulate some key assumption(s) of the system being designed. Ideally, these are the most risky parts, or the parts that you are least clear on how they should work. Don’t waste time on complicated infrastructure, build pipelines, or fancy databases; this is NOT a production system, and it doesn’t need these.

- Be quick to write, using a language or framework that is easy to prototype in. Consider using a language like Python or JavaScript/TypeScript, or perhaps even do it in a spreadsheet if the problem is suitable.

- Ideally written by a small group of people who are as close to the problem as possible. For instance, a pair consisting of an engineer and an expert in the business problem. The larger the group, the more difficult it becomes to work quickly together, especially if there is limited unit testing.

- Probably the most controversial point (for TDD purists), but it has limited automated tests. The testing should be sufficient only to cover some specific cases, invariants, or things that are critical to the system. This allows for quicker refactoring without having to edit loads and loads of tests. In many cases, where the business problem is not fully clear, it can be difficult to know what is important to test before you get into it.
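To make that last point concrete, here is a minimal sketch of what “limited” testing might look like in a Python prototype. The domain (a toy account ledger) and every name in it are hypothetical, chosen purely for illustration. The idea is to check only the one invariant that must never break, and leave everything else free to change.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Toy prototype model (hypothetical): account name -> balance in whole units."""
    balances: dict[str, int] = field(default_factory=dict)

    def deposit(self, account: str, amount: int) -> None:
        self.balances[account] = self.balances.get(account, 0) + amount

    def transfer(self, source: str, target: str, amount: int) -> None:
        available = self.balances.get(source, 0)
        if available < amount:
            raise ValueError("insufficient funds")
        self.balances[source] = available - amount
        self.balances[target] = self.balances.get(target, 0) + amount

    def total(self) -> int:
        return sum(self.balances.values())


def check_transfer_preserves_total() -> None:
    """The single critical invariant: a transfer never creates or destroys money."""
    ledger = Ledger()
    ledger.deposit("alice", 100)
    ledger.deposit("bob", 20)
    before = ledger.total()
    ledger.transfer("alice", "bob", 30)
    assert ledger.total() == before
    assert ledger.balances == {"alice": 70, "bob": 50}


# Run the one check directly; a prototype may not need a test framework yet.
check_transfer_preserves_total()
```

Note what is deliberately not tested here: input validation, formatting, and error messages. Those are likely to change several times while the model is still being worked out, so tests for them would mostly get in the way.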

If this is all sounding a bit like writing a Minimum Viable Product (MVP), that’s because the two are quite similar, but they are not the same thing. The goal of a prototype is even simpler. It’s not to have something that functions well enough for a user to use it for the actual use case (though it can be), but to serve as a model for the developers/engineers working on the project to play around with.

Given all that, it is important to know what you will do with the prototype after it has served its purpose. After you have learned all you can from developing the prototype, you typically have two choices:

1. Productionise the prototype by cleaning it up, adding tests, etc. This is sometimes called an evolutionary prototype. This can save you time, as you already have the code partially written. However, it can also cause problems, since the code was not test driven, nor is it likely to have been cleanly put together.

2. Throw away the prototype, and start again using the prototype as a guide. This time make it test driven, and clean. You can even implement it in a more performant but lower level language, since you anticipate less refactoring going forward.

On balance, I usually favour 2 (throwing it away), but there is a time and place for both. Whatever you do, however, do not try to use an unmodified prototype in production, unless you don’t care about correctness or long term maintainability.

A prototype is not necessary or useful in every situation either. If you are working on adding a small feature to an existing system, or on something where the approach is well understood (e.g. a standard CRUD app) then making a prototype will add little value. It should be saved for situations where the approach to the problem is potentially unclear, and where you suspect a bunch of trial and error will be required to come up with a good system.

Using a prototype can help reduce complexity, since it allows you to make some of the mistakes (that you were going to make anyway) ahead of time, and with less at stake. Refactoring entity relationships is much easier in a prototype than in a full-blown application. As Gall’s law states: you need to build a simple system first. And it doesn’t get more simple than a prototype.

I’m interested in hearing your thoughts on prototyping, and whether it has worked (or not worked) for you. Feel free to email or tweet (at) me.